Orange

Meet the requirements of new infrastructure inventory reporting regulations

A new regulation, effective January 1, 2026, requires network operators to use PCRS data to describe the infrastructure of their "non-sensitive" networks accurately. To comply, Orange has partnered with Alteia to improve its mapping by combining high-resolution PCRS imagery with other sources and applying AI techniques such as semantic segmentation. This partnership allows Orange to automate the identification and inventory of its equipment.

Orange has launched a plan to make its infrastructure data more reliable, secure its assets, and keep that data easily accessible in line with future regulations. By collaborating with Alteia, Orange is testing new technologies, especially artificial intelligence, to automate its mapping processes and make them more accurate and efficient.

Olivier Gonzalez, Technical Director

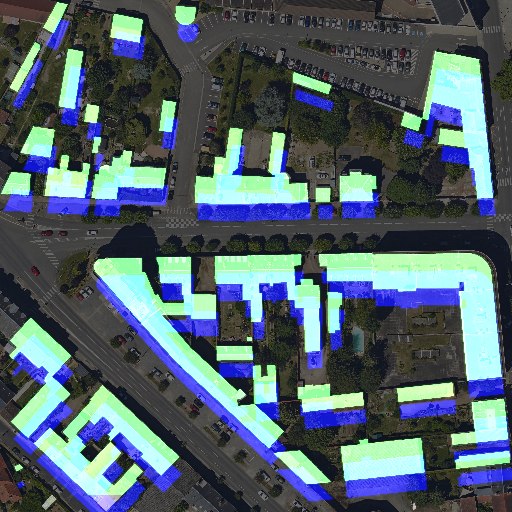

Image segmentation for object detection

Image segmentation divides an image into distinct regions, or segments, based on similarities in color, texture, or other visual features. In object detection, this technique isolates individual objects from their background so that precise boundaries can be drawn around them. Semantic segmentation goes one step further by assigning a class label, such as "building" or "road", to every pixel.
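A minimal sketch of similarity-based segmentation follows, assuming a single-channel (grayscale) image stored as a list of rows. It uses classical region growing: 4-connected neighbouring pixels whose intensities differ by at most a hypothetical `tolerance` are merged into one region, and each region's bounding box approximates an object's boundary. The function names are illustrative, and this is not the AI-based semantic segmentation model described above, which would typically be a trained neural network.

```python
from collections import deque


def segment_regions(image, tolerance):
    """Label 4-connected regions of similar intensity (region growing).

    A neighbour joins a region when its value differs from the pixel that
    reached it by at most `tolerance`. Returns a grid of integer labels.
    """
    height, width = len(image), len(image[0])
    labels = [[-1] * width for _ in range(height)]
    next_label = 0
    for y in range(height):
        for x in range(width):
            if labels[y][x] != -1:
                continue  # already part of an earlier region
            labels[y][x] = next_label
            queue = deque([(y, x)])
            while queue:  # breadth-first flood fill from the seed pixel
                cy, cx = queue.popleft()
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and labels[ny][nx] == -1
                            and abs(image[ny][nx] - image[cy][cx]) <= tolerance):
                        labels[ny][nx] = next_label
                        queue.append((ny, nx))
            next_label += 1
    return labels


def bounding_boxes(labels):
    """Return {label: (min_row, min_col, max_row, max_col)} for each region."""
    boxes = {}
    for y, row in enumerate(labels):
        for x, label in enumerate(row):
            if label not in boxes:
                boxes[label] = (y, x, y, x)
            else:
                r0, c0, r1, c1 = boxes[label]
                boxes[label] = (min(r0, y), min(c0, x), max(r1, y), max(c1, x))
    return boxes


# A bright 2x2 "object" on a dark background.
example = [
    [10, 10, 10, 10, 10],
    [10, 200, 205, 10, 10],
    [10, 198, 202, 10, 10],
    [10, 10, 10, 10, 10],
]
print(bounding_boxes(segment_regions(example, tolerance=20)))
# {0: (0, 0, 3, 4), 1: (1, 1, 2, 2)}
```

Because each pixel is compared with its neighbour rather than with the region's seed, a slow gradient can chain distinct objects together; learned models avoid this by predicting a class for each pixel directly.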

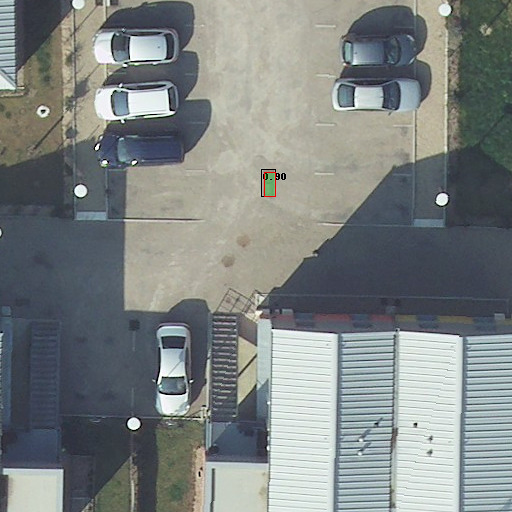

Leveraging Street View data for enhanced accuracy

Combining Street View data with aerial imagery improves object detection by pairing a broad overhead perspective with detailed street-level context. Aerial images cover large areas but may miss fine details, whereas Street View provides high-resolution, close-up imagery of urban environments. Integrating both sources gives algorithms additional context, such as building facades and road signs, which reduces detection errors. This approach is particularly useful for urban planning, transportation management, and infrastructure assessment, where it yields a more complete picture of the environment and more reliable detections.
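One common way to combine the two sources is late fusion: run a detector on each source separately, then merge detections that refer to the same object. The sketch below assumes both detectors output georeferenced points in a shared projected coordinate system (metres), each with a class label and a confidence score; the `Detection` type, the `fuse` function, the 2 m `max_dist`, and the "pole"/"cabinet" labels are all hypothetical. Matched pairs are combined with a noisy-OR, which assumes the two detectors make independent errors.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical detector output, georeferenced in a projected CRS (metres)."""
    x: float
    y: float
    label: str
    score: float  # confidence in [0, 1]


def fuse(aerial, street, max_dist=2.0):
    """Late fusion of aerial and street-level detections.

    Each aerial detection is paired greedily with the nearest unused
    street-level detection of the same label within max_dist metres.
    Paired scores are combined with a noisy-OR (independence assumption);
    unpaired detections from either source are kept unchanged.
    """
    fused = []
    used = set()
    for a in aerial:
        best_index, best_dist = None, max_dist
        for i, s in enumerate(street):
            if i in used or s.label != a.label:
                continue
            dist = math.hypot(a.x - s.x, a.y - s.y)
            if dist <= best_dist:
                best_index, best_dist = i, dist
        if best_index is None:
            fused.append(a)
            continue
        used.add(best_index)
        s = street[best_index]
        score = 1 - (1 - a.score) * (1 - s.score)
        # Place the fused object at the midpoint of the two position estimates.
        fused.append(Detection((a.x + s.x) / 2, (a.y + s.y) / 2, a.label, score))
    # Street-level detections that matched nothing are kept as-is.
    fused.extend(s for i, s in enumerate(street) if i not in used)
    return fused


aerial = [Detection(0.0, 0.0, "pole", 0.6), Detection(50.0, 0.0, "cabinet", 0.7)]
street = [Detection(1.0, 0.0, "pole", 0.5), Detection(100.0, 0.0, "pole", 0.4)]
for det in fuse(aerial, street):
    print(det)
```

Greedy nearest-neighbour matching keeps the sketch short but depends on input order; an optimal one-to-one assignment, such as the Hungarian algorithm, avoids that at a higher computational cost.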

Building detection and GIS synchronization

Using computer vision to synchronize a GIS database automates updates, reducing manual work and keeping the data accurate and current. Objects such as buildings are extracted from imagery and sensor data and used to enrich the database with detailed, up-to-date spatial information, which is essential for tasks like urban planning, environmental monitoring, and disaster management. Computer vision also helps detect changes and anomalies in geographic data, allowing early identification of issues such as land-use changes, infrastructure damage, or the effects of natural disasters.
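Synchronizing detections with a GIS layer can be framed as a change-detection diff: match each detected building to an existing GIS feature, then report unmatched detections as candidate additions and unmatched GIS features as candidates for review. The sketch below assumes axis-aligned bounding boxes `(min_x, min_y, max_x, max_y)` in a shared coordinate system and a hypothetical intersection-over-union (IoU) threshold of 0.5; the function names and feature IDs are illustrative. Real building footprints are polygons, for which a geometry library would compute the intersections.

```python
def iou(a, b):
    """Intersection over union of two boxes (min_x, min_y, max_x, max_y)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def diff_against_gis(gis, detected, threshold=0.5):
    """Compare detected boxes with GIS features {feature_id: box}.

    Returns (matched, added, missing): GIS ids paired with a detection,
    detections with no GIS counterpart, and GIS ids left unmatched.
    """
    matched, added = {}, []
    unmatched_gis = set(gis)
    for box in detected:
        best_id, best_iou = None, threshold
        for fid in sorted(unmatched_gis):  # sorted for deterministic results
            score = iou(gis[fid], box)
            if score >= best_iou:
                best_id, best_iou = fid, score
        if best_id is None:
            added.append(box)  # candidate new building
        else:
            unmatched_gis.discard(best_id)
            matched[best_id] = box
    missing = sorted(unmatched_gis)  # candidates for manual review
    return matched, added, missing


gis = {"B1": (0, 0, 10, 10), "B2": (20, 0, 30, 10)}
detected = [(1, 0, 11, 10), (40, 40, 50, 50)]
matched, added, missing = diff_against_gis(gis, detected)
print(matched, added, missing)
# {'B1': (1, 0, 11, 10)} [(40, 40, 50, 50)] ['B2']
```

A GIS feature with no matching detection has not necessarily disappeared; it may be hidden by trees or shadows, so such cases are flagged for review rather than deleted automatically.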